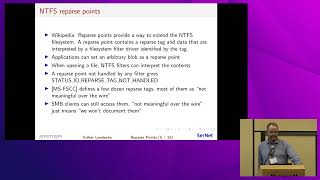

To implement the SMB2 Unix extensions, smbd needs to support NTFS reparse points so that it can present symlinks, sockets, and other special files to clients.

Standards-Based Parallel Global File Systems and Automated Data Orchestration with NFS

Wed Sep 20 | 1:30pm

Location: Salon VI, Salon VII

---

- David Flynn, Hammerspace

- Douglas Fallstrom, Hammerspace

- Floyd Christofferson, Hammerspace
